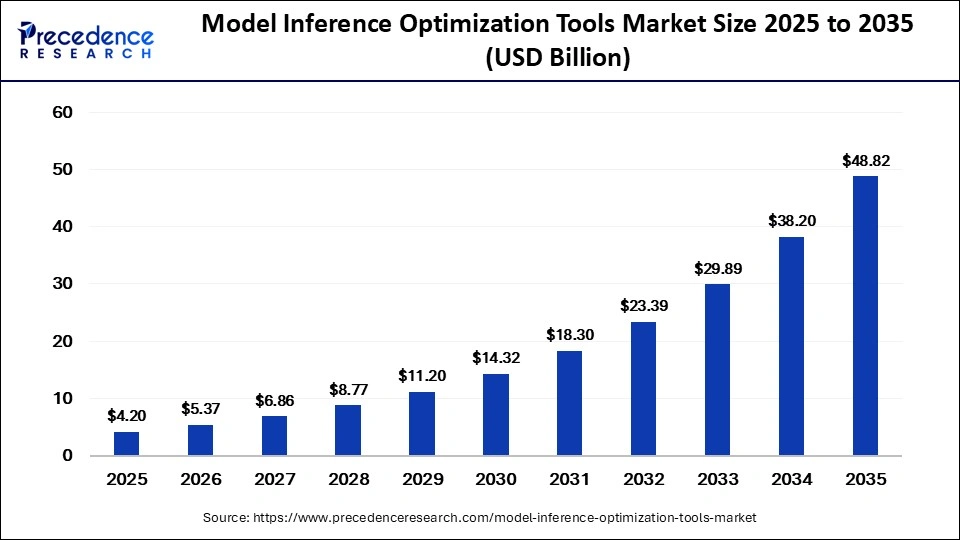

The global model inference optimization tools market is projected to reach USD 48.82 billion by 2035 at a CAGR of 27.8%. Explore AI inference trends, edge AI growth, optimization techniques, regional insights, and future opportunities.

Model Inference Optimization Tools Market: Accelerating the Future of Scalable Artificial Intelligence

Artificial intelligence is rapidly evolving from an experimental technology into a foundational component of modern enterprise infrastructure. However, as organizations deploy increasingly complex AI systems, especially generative AI and large language models (LLMs), the computational burden of inference is becoming one of the industry’s biggest operational challenges.

Inference is the stage at which a trained AI model produces outputs (predictions, classifications, or generated text) from new inputs, often in real time. As enterprises scale AI applications across cloud infrastructure, mobile devices, autonomous systems, and edge computing environments, demand is rising for faster, lower-latency, and more energy-efficient AI execution.

This growing challenge is accelerating adoption of model inference optimization tools, specialized software solutions designed to improve AI inference speed, throughput, hardware utilization, scalability, and operational efficiency.

With generative AI adoption surging globally, the model inference optimization tools market is becoming a critical pillar of next-generation AI infrastructure.

Market Overview: Strong Growth Fueled by Generative AI Expansion

The global model inference optimization tools market was valued at USD 4.20 billion in 2025 and is projected to grow from USD 5.37 billion in 2026 to approximately USD 48.82 billion by 2035, a compound annual growth rate (CAGR) of 27.80% over the forecast period.
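The headline figures can be sanity-checked with the standard compound-growth formula, value_end = value_start × (1 + CAGR)^years. The minimal Python sketch below uses only the numbers quoted in the report; treating 2025 as the compounding base year is an assumption, though it reproduces the 2035 figure closely.

```python
# Sketch: check the report's market figures for internal consistency.
# All values are in USD billions and come from the report itself.

def project(value: float, cagr: float, years: int) -> float:
    """Compound `value` forward at annual rate `cagr` for `years` years."""
    return value * (1 + cagr) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Annual growth rate that turns `start` into `end` over `years` years."""
    return (end / start) ** (1 / years) - 1


value_2025 = 4.20
value_2035 = 48.82
cagr = 0.278

# One year of growth from the 2025 base should land near the 2026 figure (5.37).
print(f"2026 projected from 2025: {project(value_2025, cagr, 1):.2f}")
# Ten years of growth from the 2025 base should land near the 2035 figure.
print(f"2035 projected from 2025: {project(value_2025, cagr, 10):.2f}")
# Growth rate implied by the 2025 and 2035 endpoints.
print(f"Implied CAGR 2025-2035: {implied_cagr(value_2025, value_2035, 10):.1%}")
```

At roughly 28% a year, the market doubles about every 2.8 years, which is how a USD 4.20 billion base grows more than elevenfold in a decade.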

The market’s rapid growth is being driven by:

- Rising deployment of large language models

- Expansion of edge AI applications

- Increasing demand for low-latency AI systems

- Rising cloud AI infrastructure costs

- Growing adoption of AI accelerators and GPUs

- Demand for energy-efficient inference infrastructure

As organizations seek scalable and cost-effective AI deployment strategies, inference optimization technologies are becoming increasingly essential across industries.

Understanding Model Inference Optimization Tools

Model inference optimization tools are software platforms that improve the execution efficiency of trained AI models during production deployment.

These tools aim to improve:

- Inference latency

- Throughput

- Memory utilization

- Energy efficiency

They do so through techniques such as:

- Hardware acceleration

- Model compression

- Runtime execution optimization

They are widely used across:

- Cloud AI infrastructure

- Edge devices

- Autonomous systems

- Industrial AI platforms

- Mobile AI applications

- Financial analytics systems

- Healthcare AI solutions

Optimization technologies help organizations reduce computational costs while maintaining AI performance and scalability.

Key Market Trends

1. Generative AI and Large Language Models Driving Massive Demand

The explosive growth of generative AI is significantly increasing demand for inference optimization technologies.

Modern transformer-based models are costly to run at inference time: an LLM generates its output one token at a time, and each new token requires another forward pass through the model. Organizations are increasingly deploying optimization tools to:

- Reduce GPU usage

- Improve token generation speed

- Lower inference latency

- Optimize memory efficiency

- Reduce cloud infrastructure costs

As AI copilots, multimodal systems, and enterprise AI assistants become mainstream, inference optimization is becoming a core operational requirement.
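Token generation speed and latency are usually tracked as separate numbers: time to first token (how long a user waits before output starts) and decode throughput in tokens per second. The sketch below derives both from per-token arrival timestamps; the timestamps are made-up illustrative values, not measurements of any real system, and the `summarize` helper is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class GenerationStats:
    time_to_first_token_s: float
    tokens_per_second: float
    total_latency_s: float


def summarize(request_start: float, token_times: list[float]) -> GenerationStats:
    """Summarize one streamed generation from token arrival timestamps (seconds)."""
    if not token_times:
        raise ValueError("no tokens were generated")
    ttft = token_times[0] - request_start
    total = token_times[-1] - request_start
    # Decode throughput: tokens after the first, over the time spent producing them.
    decode_time = token_times[-1] - token_times[0]
    tps = (len(token_times) - 1) / decode_time if decode_time > 0 else float("inf")
    return GenerationStats(ttft, tps, total)


# Illustrative timestamps: first token after 0.25 s, then one token every 20 ms.
start = 0.0
times = [0.25 + 0.02 * i for i in range(51)]
stats = summarize(start, times)
print(f"Time to first token: {stats.time_to_first_token_s:.2f} s")
print(f"Decode speed: {stats.tokens_per_second:.1f} tokens/s")
print(f"Total latency: {stats.total_latency_s:.2f} s")
```

Separating the two metrics matters because optimizations can move them independently: a technique that speeds up per-token decoding does not necessarily shorten the wait before the first token appears.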

2. Expansion of Edge AI Deployment

The rapid growth of edge AI is transforming the optimization landscape.

Organizations increasingly deploy AI models on:

- Smartphones

- IoT devices

- Autonomous vehicles

- Industrial robots

- Smart surveillance systems

- Healthcare monitoring devices

Edge AI optimization tools accounted for approximately 20% of the market in 2025 and are projected to grow at the fastest CAGR during the forecast period.

These tools help:

- Reduce latency

- Lower power consumption

- Improve local processing

- Minimize bandwidth dependency

Edge computing is emerging as one of the market’s strongest growth drivers.

3. Quantization Becoming the Dominant Optimization Technique

Quantization was the leading optimization technique, with approximately 30% market share in 2025, and is expected to maintain its leadership throughout the forecast period.

Quantization reduces computational and memory requirements by representing model weights (and often activations) with fewer bits, using lower-precision numeric formats such as:

- INT8

- FP16

- FP4

This approach significantly improves:

- Inference speed

- Memory efficiency

- Energy optimization

while maintaining acceptable model accuracy levels.
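A minimal sketch of the simplest variant, symmetric per-tensor INT8 quantization: every weight is divided by one scale factor, chosen so the largest magnitude maps to 127, and then rounded to an integer. Production toolkits use more elaborate schemes (per-channel or per-group scales, activation calibration, FP8 and FP4 formats); the weight values here are arbitrary illustrations.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor INT8 quantization: w is approximated by scale * q, q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer code and clamp to the symmetric INT8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: list[int], scale: float) -> list[float]:
    """Map INT8 codes back to approximate floating-point values."""
    return [scale * v for v in q]


weights = [0.82, -1.27, 0.003, 0.41, -0.56, 1.10]
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print("INT8 codes:", q)
print(f"scale = {scale:.5f}, max abs error = {max_err:.5f}")
```

Each weight lands within half a scale step of its original value. With 8 bits this symmetric scheme has 255 levels to work with; a 4-bit format has only 16 codes, so rounding error grows and accuracy becomes harder to preserve.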

Low-bit quantization is becoming increasingly important for deploying large AI models efficiently across constrained environments.
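The memory benefit follows directly from bits per parameter. The sketch below estimates weight storage for a hypothetical 7-billion-parameter model in each format listed above; it counts weights only and ignores activations, caches, and runtime overhead, so real deployments need more memory than these figures.

```python
# Bytes needed to store one parameter in each numeric format.
BYTES_PER_PARAM = {"FP32": 4.0, "FP16": 2.0, "INT8": 1.0, "FP4": 0.5}


def weight_memory_gb(num_params: float, fmt: str) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes), weights only."""
    return num_params * BYTES_PER_PARAM[fmt] / 1e9


params = 7e9  # hypothetical 7-billion-parameter model
for fmt in BYTES_PER_PARAM:
    print(f"{fmt}: {weight_memory_gb(params, fmt):.1f} GB")
```

Each halving of precision halves the weight footprint, which can decide whether a model fits on a single accelerator or an edge device at all.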

4. Hardware-Aware Optimization Emerging as a Strategic Priority

Inference optimization platforms are increasingly designed around specialized AI hardware architectures.

Optimization tools now closely integrate with:

- GPUs

- TPUs

- NPUs

- FPGA accelerators

- Custom AI chips

Hardware-aware optimization improves execution efficiency by tailoring model performance to underlying hardware capabilities.
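At its simplest, hardware-aware optimization means choosing the lowest precision a target executes natively, subject to an accuracy floor. The sketch below is illustrative only: the target names and capability table are hypothetical placeholders, and real toolchains weigh many more factors than numeric format.

```python
# Hypothetical capability table: formats each target executes natively.
# Real support depends on the specific chip generation and runtime.
NATIVE_FORMATS = {
    "datacenter_gpu_new": {"FP16", "INT8", "FP4"},
    "datacenter_gpu_old": {"FP16"},
    "mobile_npu": {"INT8"},
}

BITS = {"FP4": 4, "INT8": 8, "FP16": 16, "FP32": 32}


def pick_precision(target: str, min_bits_allowed: int = 4) -> str:
    """Return the lowest-bit format the target supports natively.

    `min_bits_allowed` stands in for an accuracy constraint: formats with
    fewer bits are skipped. FP32 is assumed to work everywhere as a fallback.
    """
    supported = NATIVE_FORMATS.get(target, set()) | {"FP32"}
    for fmt in sorted(supported, key=lambda f: BITS[f]):
        if BITS[fmt] >= min_bits_allowed:
            return fmt
    return "FP32"


for device in NATIVE_FORMATS:
    print(device, "->", pick_precision(device))
print("datacenter_gpu_new with an 8-bit floor ->", pick_precision("datacenter_gpu_new", 8))
```

The same model may therefore ship as FP4 on one accelerator, INT8 on another, and FP16 on older hardware, which is why optimization tools integrate closely with specific hardware.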

Google Cloud’s latest TPU 8i architecture highlights how AI inference infrastructure is evolving toward low-latency and energy-efficient optimization at hyperscale levels.

5. Rising Focus on Energy-Efficient AI Infrastructure

AI inference workloads are dramatically increasing global data center energy consumption.

Organizations are increasingly deploying optimization tools to:

- Reduce power consumption

- Improve throughput-per-watt efficiency

- Lower cooling costs

- Support more sustainable AI deployment

Energy-efficient inference infrastructure is becoming a major strategic priority across the AI ecosystem.

Companies developing inference-specific AI accelerators are increasingly competing on performance-per-watt efficiency rather than raw compute power alone.
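Performance per watt is simply throughput divided by power; for token generation, tokens per second divided by watts gives tokens per joule. The sketch below compares two hypothetical accelerators with invented figures to show how a device with lower raw throughput can still be the more energy-efficient choice.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    name: str
    tokens_per_second: float  # sustained inference throughput
    power_watts: float        # average power draw under that load


def tokens_per_joule(acc: Accelerator) -> float:
    """Throughput per watt: (tokens/s) / W = tokens per joule."""
    return acc.tokens_per_second / acc.power_watts


# Hypothetical devices: B is slower in absolute terms but draws half the power.
candidates = [
    Accelerator("A (high raw compute)", tokens_per_second=12_000, power_watts=1_000),
    Accelerator("B (inference-focused)", tokens_per_second=9_000, power_watts=500),
]
for acc in candidates:
    print(f"{acc.name}: {tokens_per_joule(acc):.1f} tokens/J")

best = max(candidates, key=tokens_per_joule)
print("Most energy-efficient:", best.name)
```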

Market Dynamics

Market Drivers

Rapid Growth of Large-Scale AI Models

The increasing complexity of modern AI models is significantly driving demand for inference optimization technologies.

Large foundation models require:

- Massive computational resources

- Low-latency execution

- Efficient memory utilization

- Scalable deployment infrastructure

Optimization tools help enterprises deploy large-scale AI applications more efficiently while reducing infrastructure costs.

Demand for Real-Time AI Applications

Applications such as:

- Autonomous driving

- Industrial automation

- AI chatbots

- Smart surveillance

- Medical diagnostics

- Financial trading systems

require ultra-fast inference processing.

Optimization technologies are becoming essential for supporting real-time AI responsiveness across latency-sensitive environments.

Expansion of AI Cloud Infrastructure

Cloud service providers are increasingly scaling AI inference services globally.

Cloud-based optimization led the market with approximately 55% share in 2025.

Optimization tools help cloud providers:

- Improve GPU utilization

- Reduce infrastructure waste

- Lower inference costs

- Improve service scalability
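The link between GPU utilization and inference cost listed above can be made concrete with a cost-per-token calculation. The sketch assumes an accelerator rented by the hour; the hourly price and throughput are hypothetical, and idle time is treated as paid but unproductive.

```python
def cost_per_million_tokens(hourly_price_usd: float, tokens_per_second: float,
                            utilization: float = 1.0) -> float:
    """USD per 1M generated tokens for an accelerator rented by the hour.

    `utilization` is the fraction of each hour spent serving requests; idle
    time still costs money, so lower utilization raises the effective price.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    tokens_per_hour = tokens_per_second * 3600 * utilization
    return hourly_price_usd / tokens_per_hour * 1_000_000


# Hypothetical numbers: a $4/hour GPU that serves 2,000 tokens/s when busy.
for util in (0.3, 0.6, 0.9):
    cost = cost_per_million_tokens(4.0, 2_000, util)
    print(f"utilization {util:.0%}: ${cost:.3f} per 1M tokens")
```

Because cost scales inversely with utilization, tripling utilization from 30% to 90% cuts the effective cost per token to one third without any change to the model itself.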

Advancements in AI Accelerators

The rapid evolution of AI chips and accelerators is creating new opportunities for software optimization platforms.

Companies increasingly require optimization systems capable of supporting:

- Heterogeneous hardware environments

- Multi-cloud AI deployment

- AI accelerator orchestration

Market Challenges

High Computational Costs

Despite optimization advances, AI inference continues to require significant computational resources.

Organizations face challenges related to:

- GPU shortages

- Infrastructure costs

- High energy consumption

- Hardware scalability limitations

High compute costs remain a major barrier for many enterprises.

Hardware Compatibility Limitations

Not all optimization techniques are fully compatible across AI hardware architectures.

Certain optimization methods, such as low-bit quantization, deliver speedups only on hardware with native support for the reduced-precision data types, so they may run inefficiently on older GPUs and legacy systems.

Complexity of Large-Scale AI Deployment

Deploying optimized AI systems across:

- Cloud environments

- Edge infrastructure

- Hybrid architectures

requires highly specialized expertise in:

- AI infrastructure

- Distributed computing

- GPU optimization

- Compiler technologies

The global shortage of AI infrastructure specialists remains a key industry challenge.

Regional Insights

North America – Dominant Region

North America led the market with approximately 42% share in 2025.

The region benefits from:

- Advanced AI ecosystem

- Presence of hyperscale cloud providers

- Strong semiconductor industry

- Large enterprise AI investments

The United States remains the global leader in AI inference infrastructure innovation.

Asia Pacific – Fastest Growing Region

Asia Pacific is projected to grow at the fastest CAGR during the forecast period.

Growth drivers include:

- Expansion of AI startups

- Government AI initiatives

- Increasing cloud infrastructure investments

- Rapid digital transformation

Countries such as China, India, Japan, and South Korea are leading regional expansion.

Europe

Europe continues to experience strong growth supported by:

- Enterprise AI adoption

- Edge computing expansion

- AI regulatory initiatives

- Sustainability-focused infrastructure investments

Competitive Landscape

The model inference optimization tools market is becoming increasingly competitive as:

- Cloud providers

- Semiconductor companies

- AI startups

- Infrastructure software vendors

expand their optimization ecosystems.

Major industry participants include:

- NVIDIA

- AWS

- Google Cloud

- Microsoft

- AMD

- Intel

- Qualcomm

- Hugging Face

- Cerebras Systems

- Together AI

- Fireworks AI

Companies are focusing on:

- AI accelerator optimization

- Edge AI deployment

- Low-latency inference

- Multi-cloud orchestration

- Sustainable AI infrastructure

Strategic partnerships between AI model developers and hardware vendors are accelerating innovation across the ecosystem.

Future Outlook: Toward Autonomous AI Infrastructure

The future of AI deployment will increasingly depend on advanced inference optimization systems.

Key Future Trends

- Autonomous AI infrastructure orchestration

- Real-time multimodal inference

- AI-native optimization platforms

- Energy-aware AI deployment

- Edge-native inference acceleration

- Hardware-software co-optimization ecosystems

As AI models continue expanding in complexity and scale, optimization tools will become indispensable components of global AI infrastructure.

Conclusion

The model inference optimization tools market is rapidly emerging as one of the most critical pillars of the modern AI ecosystem. As enterprises increasingly deploy AI across cloud, edge, and real-time environments, efficient inference optimization is becoming essential for scalable, cost-effective, and sustainable AI adoption.

Get a Sample Copy: https://www.precedenceresearch.com/sample/8383

For inquiries regarding discounts, bulk purchases, or customization requests, please contact us at sales@precedenceresearch.com